A professional team at your service

We can solve any problem on your website

We have the experience, specialized knowledge, and advanced tools needed for the end-to-end management of your website or online store. From concept to execution, we are committed to excellent service and to effectively resolving any challenge that arises on your digital platform.

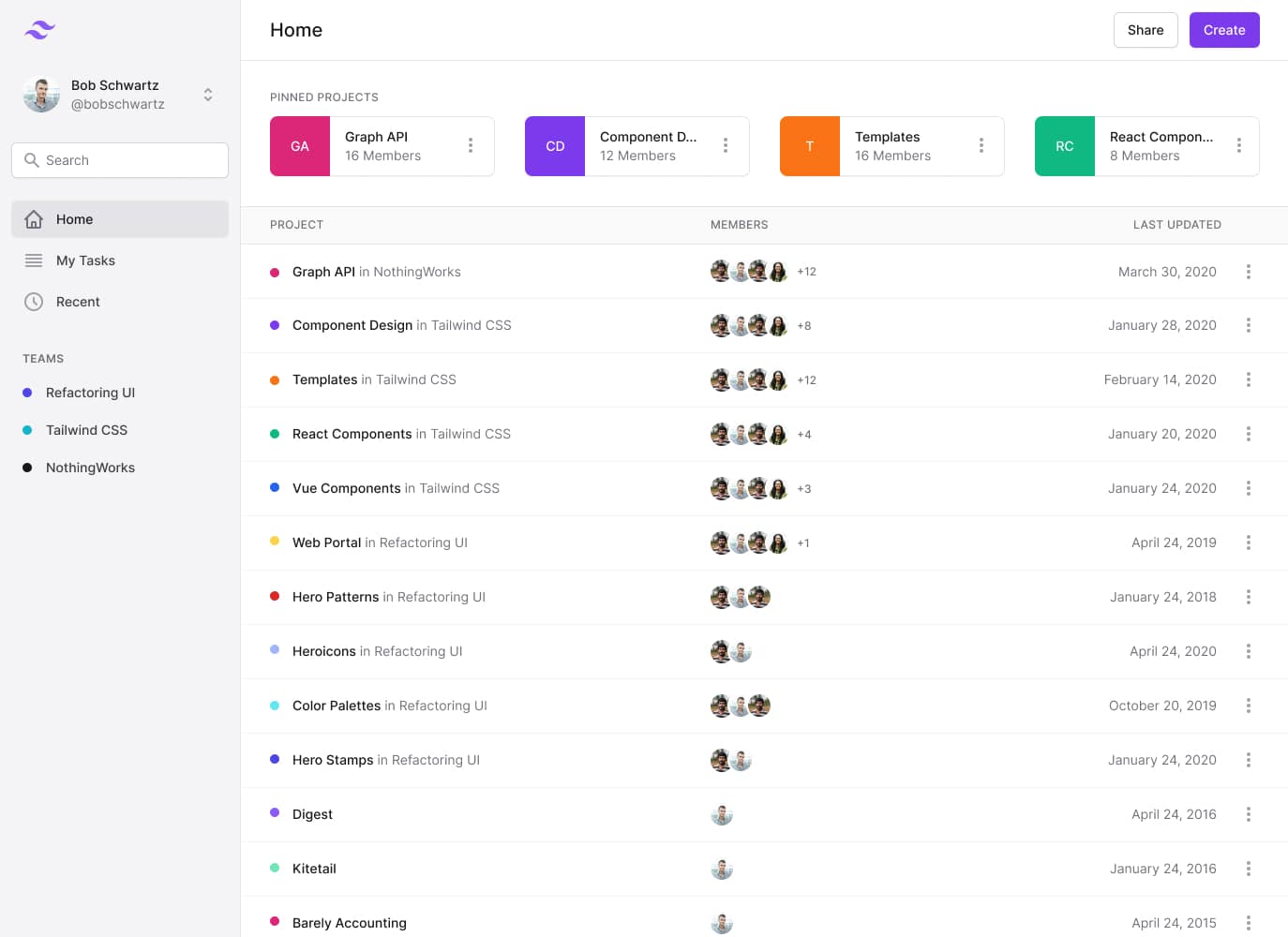

4.7/5 Read reviews of Php Ninja on Google reviews. Discover all our services for WordPress, Prestashop and Drupal.